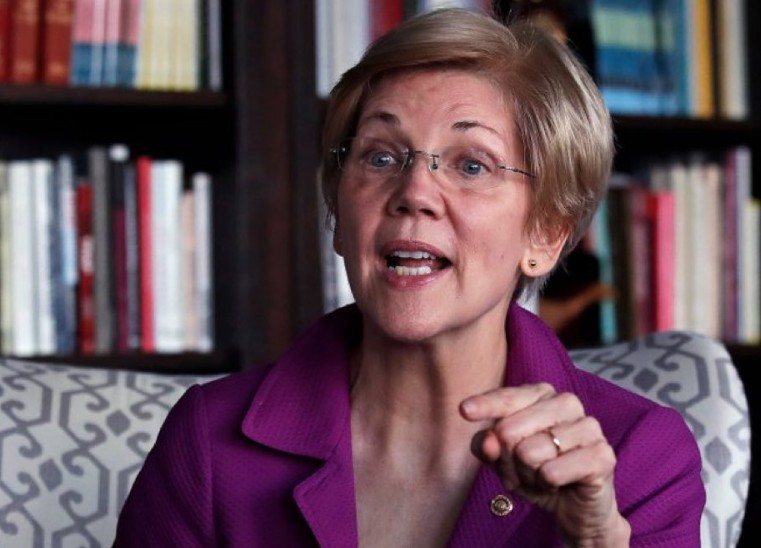

US Senator Elizabeth Warren has accused President Donald Trump and Defence Secretary Pete Hegseth of attempting to “extort” Anthropic AI into removing safety guardrails from its artificial intelligence systems.

The dispute erupted on Friday after Trump ordered federal agencies to immediately stop using Anthropic AI technology.

At the centre of the row is a $200 million (approx. £157 million) Pentagon contract signed last summer, and a clash over whether the company must allow its AI model, Claude, to be used for fully autonomous weapons and large-scale surveillance.

The issue matters because it touches on national security, corporate independence and the global debate over how far governments should push artificial intelligence into military decision-making.

It also carries implications for the UK, given Britain’s close defence and intelligence ties with Washington.

What triggered the dispute over Anthropic AI guardrails?

Elizabeth Warren, the ranking member of the Senate Banking, Housing and Urban Affairs Committee, accused Donald Trump and Pete Hegseth of pressuring Anthropic to weaken its internal safeguards.

In a statement released on Friday, Warren said the administration sought to remove “common sense guardrails that protect Americans from mass surveillance and fully autonomous weapons, with no human decision-makers, that can kill with impunity.”

She added: “Congress needs to put in place restrictions to stop this Administration from using bipartisan national security authorities to bully and punish American companies that won’t advance their authoritarian agenda.”

Anthropic signed a $200 million contract with the US Department of Defence last summer. However, negotiations reportedly stalled because the company’s terms of service prevent Claude from being used in fully autonomous lethal weapons systems or broad domestic surveillance.

Why did Trump order federal agencies to stop using Anthropic AI?

On Friday, Trump directed federal agencies to “immediately cease” using Anthropic AI technology.

The US Department of Defence also labelled the company a “supply chain risk” to national security, a classification that can prevent military contractors and partners from working with the firm.

Trump accused the company of attempting to “STRONG-ARM the Department of War” (a name the administration now uses for the Department of Defence) and of trying to make it follow corporate rules rather than federal authority.

According to statements released during the dispute, the Pentagon’s final offer requested access to Claude for “all lawful purposes.” Anthropic responded that negotiations had made “virtually no progress.”

Anthropic CEO Dario Amodei said the company could not “in good conscience” accept the Pentagon’s terms.

What are AI guardrails?

AI guardrails are technical restrictions and usage policies that developers build into, or attach to, artificial intelligence systems to prevent harmful or unethical uses.

In simple terms, they set boundaries on what a system can be used for. For military systems, guardrails typically aim to ensure:

- A human remains in control of lethal decisions

- AI cannot independently select and attack targets

- Systems cannot conduct mass domestic surveillance

Supporters argue these safeguards reduce the risk of unintended harm, abuse of power or escalation in conflict. Critics say strict limits could weaken national defence capabilities.

The dispute between the Pentagon and Anthropic reflects a wider global debate. Governments want access to cutting-edge AI tools, while some technology firms want to limit how their models are used, especially in life-and-death situations.

How unusual is the “supply chain risk” label?

Anthropic described the classification as “legally unsound” and a “dangerous precedent.”

The company added that designating it as a supply chain risk would be unprecedented for an American firm. Historically, such labels have targeted foreign entities viewed as national security threats.

If upheld, the designation could restrict Anthropic’s access to government contracts and defence partnerships. It could also send a strong message to other AI developers negotiating with US authorities.

What does this mean for the UK?

Although the conflict is unfolding in Washington, it has potential consequences for Britain.

The UK works closely with the US on defence technology, intelligence sharing and AI research. If US authorities push firms to relax AI guardrails for military use, British policymakers may face similar questions.

The UK Government has already positioned itself as a leader in AI safety, hosting the AI Safety Summit at Bletchley Park in 2023.

British regulators continue to assess how AI systems should operate within defence, policing and surveillance frameworks.

Any shift in US policy could influence:

- UK defence procurement decisions

- Standards applied to British AI firms

- NATO-wide approaches to autonomous weapons